On the road to Hamburg for ISC'24

I have the great fortune of being able to attend the 2024 ISC High Performance conference in Hamburg next week, where I will be both speaking and listening throughout the contributed program and the workshop day.

I'm going in with a few goals in mind:

Connect with the international HPC community

Engaging with the HPC community--whether reconnecting with former collaborators and friends, talking to strangers on the exhibit floor, or just posting commentary on Twitter--is the single biggest reason I attend ISC and SC. ISC in particular is a great conference because it is truly international, and it puts European and Asian HPC progress front-and-center. It's a smaller, more focused event that feels like a distilled version of SC. All the big names in the field will be there, and because the conference is smaller, it's easier to find some time to actually meet them. To that end, I'll likely spend a lot of time floating around and catching up with people.

Support the business

I'll also be providing technical input to customer meetings and hanging around the Microsoft booth (F30) to talk to anyone who wants to talk about HPC or AI infrastructure. I work on the AI engineering team that supports the 560 PF Eagle supercomputer in Azure, and my role will be to sound smart and answer questions about Azure's leadership supercomputing capabilities. No, it doesn't run Windows. Yes, it's all one big InfiniBand fabric. Come say hi.

Challenge conventional thinking

The world of HPC is rapidly evolving beyond the traditional case where a giant cluster with a parallel file system sits in a walled garden inside some large government facility. The AI industry now leads in scale, budget, and pace of deployment, and significant innovation is being led by startups and hyperscalers rather than professors and public servants. I don't think the HPC community really grasps how quickly this shift happened, so I'll be giving a few short talks that illustrate how old conventions no longer hold. More on that below.

Where I'll be

There are a few events I signed up to help with this year, so I can guarantee I'll be at the following sessions.

ISC'24 Research Paper Session: Power, Energy, and Performance

- Date: Wednesday, May 15 at 10:45am

- More info: Research Paper Session: Power, Energy and Performance (swapcard.com)

I volunteered to chair this session of the technical program where three papers will be presented on topics related to increasing the efficiency of high-performance computing workloads:

- Power Consumption Trends in Supercomputers: A Study of NERSC's Cori and Perlmutter Machines, by Ermal Rrapaj et al. This paper was authored by my former group at NERSC based on power telemetry from systems that I helped design and optimize.

- EcoFreq: Compute with Cheaper, Cleaner Energy via Carbon-aware Power Scaling, by Kozlov et al. This paper presents a framework for scheduling workloads to run at times when energy is greener (e.g., when the sun is shining or the wind is blowing) to reduce the carbon impact of these jobs. Paying attention to the energy mix available when a job is running is becoming increasingly important in my own work, so I'm looking forward to hearing how this is being thought about in Europe.

- BlueField: Accelerating Large-Message Blocking and Nonblocking Collective Operations, by Rich Graham et al. This paper details how the programmable offload capabilities of NVIDIA's BlueField smart NIC can be used to accelerate some collectives. Although not the focus of their paper, offloading computation to lower-power, purpose-built devices such as smart NICs can decrease the overall energy cost of running a workload. This paper is also stacked with notable authors.
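To make the time-shifting idea behind carbon-aware scheduling concrete, here is a minimal sketch, assuming an hourly carbon-intensity forecast and a job that draws constant power for a whole number of hours. EcoFreq itself, going by its title, scales power rather than only shifting start times, so treat this as an illustration of the general idea and not the paper's algorithm; the function name and forecast values are hypothetical.

```python
from typing import List, Tuple


def greenest_start(forecast_g_per_kwh: List[float], duration_hours: int) -> Tuple[int, float]:
    """Pick the start hour whose window has the lowest summed carbon intensity.

    Assumes the job draws constant power, so minimizing the summed intensity
    over its run window also minimizes its emissions. A sliding window keeps
    this O(n) in the forecast length.
    """
    if not 0 < duration_hours <= len(forecast_g_per_kwh):
        raise ValueError("duration must fit inside the forecast horizon")
    window = sum(forecast_g_per_kwh[:duration_hours])
    best_start, best_sum = 0, window
    for start in range(1, len(forecast_g_per_kwh) - duration_hours + 1):
        # Slide the window one hour: drop the hour leaving, add the hour entering.
        window += forecast_g_per_kwh[start + duration_hours - 1] - forecast_g_per_kwh[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum


if __name__ == "__main__":
    # Hypothetical forecast in gCO2/kWh, e.g. midday solar pushing intensity down.
    forecast = [300, 250, 100, 80, 120, 400]
    print(greenest_start(forecast, 2))  # (2, 180)
```

Multiplying the returned sum by the job's power draw in kW gives its estimated emissions in grams; a real scheduler would also have to weigh deadlines and queue wait time against the carbon savings.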

Artificial Intelligence and Machine Learning for HPC Workload Analysis BOF

- Date: Wednesday, May 15 at 1:00pm

- More info: Artificial Intelligence and Machine Learning for HPC Workload Analysis (swapcard.com)

I will be presenting a talk titled "AI and ML for workload analysis in the public cloud" at this BOF. I want to present two halves to HPC workload analysis in the cloud environment:

- the traditional perspective, where you profile nodes and applications to understand what they are doing, and

- the cloud perspective, where trying to observe what an application is doing is a violation of customer privacy.

I'll show how Azure offers powerful ML tools that make the first easy, then talk about some of the challenges we face with the second and how we address them, so that we know just enough about our customers' workloads to keep delivering new products that work well for them while preserving the safety of our infrastructure at scale.

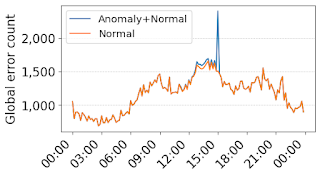

As is often the case when I give short talks at conferences, I am not an expert in this topic and I had to convince myself that what I was saying actually made sense. To that end, I had to figure out how some of the built-in machine learning methods in Azure's telemetry database service worked and convince myself that it was useful by making the following plot:

It's from a tutorial that shows how, using an aggregation query and a built-in time series segmentation function, you can count error generation rates over time and find common features across all of them. I think the second half of the talk will be more interesting since many folks in HPC probably don't think about privacy, but I felt I had to include at least something technical (like a plot that I made!).
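For anyone wondering what "time series segmentation" means here, a rough illustration: assuming the built-in function behaves like a two-segment linear fit (one common way to locate where a trend changes), the sketch below brute-forces the split point that minimizes total squared error. This is a hypothetical stand-in written in plain Python, not Azure's implementation, and the error-count series is made up.

```python
from typing import List, Optional, Sequence, Tuple


def _linfit_sse(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Fit a least-squares line through (xs, ys) and return its sum of squared residuals."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx if sxx else 0.0
    intercept = my - slope * mx
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


def best_split(series: List[float], min_len: int = 2) -> Tuple[Optional[int], float]:
    """Return (k, sse): splitting at index k and fitting one line per side minimizes total error."""
    xs = list(range(len(series)))
    best: Tuple[Optional[int], float] = (None, float("inf"))
    for k in range(min_len, len(series) - min_len + 1):
        sse = _linfit_sse(xs[:k], series[:k]) + _linfit_sse(xs[k:], series[k:])
        if sse < best[1]:
            best = (k, sse)
    return best


if __name__ == "__main__":
    # Hypothetical hourly error counts: flat, then a jump and a steady climb.
    errors = [1, 1, 1, 1, 1, 1, 8, 9, 10, 11]
    print(best_split(errors))  # (6, 0.0): the regime change starts at hour 6
```

Running something like this per node or per error type, then grouping the series whose change points line up, is roughly how you'd start finding "common features" across many telemetry streams.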

HPC I/O in the Data Center Workshop

- Date: Thursday, May 16 at 11:30am

- More info: HPC-IODC: HPC I/O in the Data Center Workshop [HPS] (vi4io.org)

I will be co-presenting an expert talk titled "Debunking the I/O Myths of Large Language Model Training" with two of my esteemed colleagues from VAST Data, Kartik and Sven Breuner. This talk resulted from a conversation that Kartik and I had after he wrote an insightful blog post on how the I/O demands of training large language models are extremely overblown. I gave a presentation to this effect at the HDF5 BOF at SC'23 last year, and Kartik went one better by developing a beautiful model that lets you calculate exactly how much I/O performance you need to train a large language model like Llama or GPT.
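Kartik's model is his to present, but the flavor of this kind of sizing can be shown with a back-of-the-envelope estimate for checkpoint writes alone. This sketch assumes roughly 14 bytes of checkpointed state per parameter (bf16 weights plus fp32 master weights and two fp32 Adam moments); the 70-billion-parameter model and 60-second checkpoint budget are illustrative assumptions, not numbers from the talk.

```python
def checkpoint_bytes(num_params: float, bytes_per_param: float = 14.0) -> float:
    """Size of one full checkpoint.

    bytes_per_param=14 is an assumption for mixed-precision Adam:
    2 (bf16 weights) + 4 (fp32 master weights) + 8 (two fp32 Adam moments).
    """
    return num_params * bytes_per_param


def checkpoint_bandwidth_gbps(num_params: float, checkpoint_seconds: float,
                              bytes_per_param: float = 14.0) -> float:
    """Aggregate write bandwidth (GB/s) needed to finish one checkpoint in checkpoint_seconds."""
    return checkpoint_bytes(num_params, bytes_per_param) / checkpoint_seconds / 1e9


def checkpoint_overhead(checkpoint_seconds: float, interval_seconds: float) -> float:
    """Fraction of wall time spent blocked on checkpoints if training stalls while writing."""
    return checkpoint_seconds / interval_seconds


if __name__ == "__main__":
    params = 70e9  # illustrative 70B-parameter model
    print(f"{checkpoint_bytes(params) / 1e9:.0f} GB per checkpoint")          # 980 GB
    print(f"{checkpoint_bandwidth_gbps(params, 60):.1f} GB/s to write in 60 s")  # 16.3 GB/s
    print(f"{checkpoint_overhead(60, 3600):.1%} of time with hourly checkpoints")  # 1.7%
```

Even with these rough assumptions, the required bandwidth is modest for a cluster large enough to train such a model, which is the spirit of the "overblown" argument.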

I liked his model so much that I thought we should share it with the world since I had built a spiritually similar model for sizing all-flash Lustre file systems and presented it at HPC-IODC a few years ago. Kartik and Sven will present the model, and I will provide some perspective on how the most demanding I/O patterns of LLM training can be further optimized by using more sophisticated hierarchical checkpointing.
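Hierarchical checkpointing comes in several flavors. A minimal sketch of the basic idea, assuming a fast node-local tier and a slower shared tier (both just directories here), is to block training only for the local write and then drain the checkpoint to shared storage in a background thread. The function name and file layout are hypothetical, not what we'll present.

```python
import os
import shutil
import tempfile
import threading


def save_checkpoint_hierarchical(state: bytes, step: int,
                                 local_dir: str, global_dir: str) -> threading.Thread:
    """Write a checkpoint to a fast local tier, then drain it to a shared tier asynchronously.

    Training only blocks for the local write; the copy to global_dir overlaps with
    subsequent training steps. Returns the drain thread so the caller can join it
    before the next checkpoint (or at shutdown).
    """
    name = f"ckpt_{step:08d}.bin"
    local_path = os.path.join(local_dir, name)

    # Blocking phase: persist to node-local storage (e.g., NVMe), assumed to be fast.
    with open(local_path, "wb") as f:
        f.write(state)
        f.flush()
        os.fsync(f.fileno())

    def drain() -> None:
        # Asynchronous phase: copy under a temporary name, then rename atomically
        # so readers of the shared tier never see a partially written checkpoint.
        tmp_path = os.path.join(global_dir, name + ".partial")
        shutil.copyfile(local_path, tmp_path)
        os.replace(tmp_path, os.path.join(global_dir, name))

    t = threading.Thread(target=drain, daemon=True)
    t.start()
    return t


if __name__ == "__main__":
    local = tempfile.mkdtemp()
    shared = tempfile.mkdtemp()
    drain_thread = save_checkpoint_hierarchical(b"\x00" * 1024, 100, local, shared)
    # ... training would continue here while the drain runs ...
    drain_thread.join()
    print(sorted(os.listdir(shared)))  # ['ckpt_00000100.bin']
```

The design choice is that the shared file system only has to absorb one checkpoint per checkpoint interval instead of within the short window during which training is stalled, which relaxes its bandwidth requirement considerably.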

Workshop on Interactive and Urgent High-Performance Computing

- Date: Thursday, May 16 at 5:10pm

- More info: InteractiveHPC

I was invited to present a lightning talk about how Azure can support interactive and urgent high-performance computing workloads. Rather than presenting the same old boring "you can burst to the cloud if you need compute nodes for interactive or urgent computing" story, I decided to defy expectations and give a talk titled "Memes to meteors: how generating jokes prepares us for urgent scientific computing." I'll toss aside the myth that the cloud is limitless, and instead focus on the interesting convergence I'm seeing between two unexpected forms of computation: scalable web service delivery and tightly coupled, high-performance computing.

The marriage of these two worlds allows scientists to run workflows that use genuine HPC resources (like InfiniBand-connected compute nodes) but get the same level of critical infrastructure support that businesses rely on for their most time-critical, throughput-sensitive, urgent workloads like supporting Black Friday sale transactions or serving up ChatGPT responses. This talk is either going to be really interesting or sound like corporate shilling masquerading as an imperfect comparison.

To eat my own dogfood, I used LLM inferencing to help me make the talk. In addition to structuring and pacing the presentation, it suggested this meme:

Its quality (or lack of quality!) helps drive home the point that there's a lot of fancy infrastructure to orchestrate some really big GPU systems to accomplish dumb things, but scientists can ride the wave of services built on the backs of AI to solve societally meaningful, urgent HPC problems.

Other sessions of note

Here are a few other sessions that caught my eye as I built out my agenda.

Monday: Keynotes and Exhibitors!

The opening keynote will be delivered by Kathy Yelick at 9:15am. She always gives fast, deeply insightful talks, and I'm particularly looking forward to hearing her perspective on "disruptions in the computing marketplace, which include supply chain limitations, a shrinking set of system integrators, and the growing influence of cloud providers." I've now been on both sides of this equation--both the victim (as a buyer of HPC) and the cause (now seeing where all that supply chain and system integration expertise has gone)--and I'm curious if her perspective will match up with my lived experience.

Aside from that, there are a number of other interesting sessions on the schedule:

- The DAOS Foundation is hosting an in-person meeting at the conference at 10:30am. Although not part of the official conference program, it is in the conference hall and will likely be a who's-who of luminaries in the high-performance storage industry. It will also be the first major meeting since Intel spun off DAOS into the hands of an independent foundation late last year.

- The TOP500 presentation starts at 11:20am, and we'll get to see if Aurora overtakes Frontier as #1. Sadly, this overlaps with the DAOS Foundation meeting, so HPC storage folks will have a hard choice to make.

- The afternoon will have an assortment of fun activities including dueling Vendor Showdowns hosted by the Intersect360 folks, Dan Olds and Addison Snell. In terms of mixing technology with entertainment, it's hard to beat these two sessions.

- My old team at NERSC won the ISC 2024 Hans Meuer Award this year, and the award ceremony and talk will be presented at 4:30pm. The paper is on modeling how much classical computing is required to do the same thing as a quantum computer, and it's particularly notable that all of the authors are from NERSC (an HPC service provider), not members of a CS research organization.

- Following that, Rick Stevens is giving a presentation that sounds pretty visionary called "Building frontier AI systems for science and the path to zettascale" at 6:05pm. I'd be lying if I said I'd attend this to do anything except chuckle quietly at how crazy it all sounds from the perspective of someone who works in the sphere of building frontier AI systems on the path to zettascale in industry.

- The illustrious Dan Ernst is making his ISC debut at 6:20pm where he'll be presenting on the architectural intersections of HPC and AI. He's a really sharp and insightful guy, so I'm sure this will be a good talk.

Finally, the ISC exhibit hall opens at 6:30pm and there's guaranteed to be a lot of energy (and free swag). I'll probably be alternating between hanging around the Microsoft booth and taking in the diversity of booths.

Tuesday: Storage, cloud, and AI: my three favorite things

Tuesday is packed with interesting sessions that touch on three of my favorite topics these days: storage, cloud, and AI.

- There's a session called "Synergistically integrating HPC and cloud" at 9:00am, and the title alone suggests it might be crazy. Sadly, I have a private meeting that conflicts, so I hope someone else will take notes.

- The IO500 BOF is at 10:05am, and although I no longer work in storage, it's a really good community event in which a lot of people who deeply care about HPC I/O gather.

- There's a BOF called "AI service centers: pioneering AI research and infrastructure in Germany" at 11:10am which sounds interesting. I'm always curious to see how traditional HPC communities are trying to position themselves in the AI space, and I'm particularly interested in hearing the German perspective since Germany doesn't have as outsized a presence in AI.

- The Lustre BOF is at 1:00pm. My colleague Brian Barbisch will be participating as a speaker and sharing his perspective as one of the technical leaders for Azure Managed Lustre. He's a sharp guy and will outline how Azure hides the complexity of creating and managing Lustre file systems behind a simple REST API.

- There's a BOF about AI accelerators for HPC applications at 2:15pm that sounds like it might be wacky. The abstract specifically cites Cerebras, Groq, SambaNova, Graphcore, and Gaudi--bespoke AI accelerators that haven't been able to put a dent in NVIDIA's dominance of the AI acceleration market. I'm dying to hear how the organizers position their investments in these accelerators over doing the obvious thing and just buying GPUs.

- Finally, there's a generic-sounding talk called "Large-scale applications towards Exascale computing" at 2:50pm that I initially overlooked. However, the speaker is Yutong Lu (former ISC 2019 chair and former director of NSCC, the site that hosted Tianhe-2) and the abstract suggests she might talk about some of those shadowy Chinese exascale systems.

Wednesday: Power, AI, and workforce development

The last day of the proper conference has a number of interesting sessions revolving around a few topics that I follow. In addition to the sessions at which I have speaking roles, the following caught my eye:

- A session called "Generative AI for science" starts at 9:00am. I initially assumed this would be another case of researchers trying to wedge LLMs into solving problems that don't require using LLMs, and maybe it is. But this session features European researchers exclusively, and I'm curious to see if their approaches are any more realistic than the proof-of-concept work in this space that often shows up at SC.

- There's a session on "developing a sustainable future for HPC and RSE skills" at 10:05am; while it looks very academically oriented, I'm wondering if it's fertile ground for furtively identifying prospective recruits. It turns out that optimizing junky gradware is great training for optimizing distributed training applications.

- I'll be chairing a research paper session titled "Power, Energy, and Performance" at 10:45am which I detailed above.

- I'll also be presenting a lightning talk at the BOF on "Artificial Intelligence and Machine Learning for HPC Workload Analysis" at 1:00pm.

- Also at 1:00pm is a paper presentation called "Optimizing Metadata Exchange: Leveraging DAOS for ADIOS Metadata I/O." I was a reviewer for this paper, and it's worth checking out for anyone interested in DAOS. It's the first published study that shows that DAOS still suffers from some of the complexities of parallel file systems when used in certain ways.

- There's a BOF on "Sustainability, carbon-neutrality and procurement for HPC" at 2:30pm which I might attend. People certainly talk about the desire to minimize the environmental impact of HPC, but in my experience in industry, HPC procurements don't act upon this in practice. Rather than deploy HPC in locations with a high ratio of green energy, they just stick GPUs in their existing data centers and claim success. I'm interested to see if that story has changed.

- Also at 2:30pm is a BOF for students on "enjoying a career and community in HPC." Community is what drew me away from a career in materials science and into the world of HPC, so I'd like to do what I can to pass this along to the students of today.

- There's a BOF titled "Co-designing next generation supercomputing systems" at 4:00pm, and its title sounds like something that has appeared on every ISC/SC program since the beginning of time. However, this session is organized by a bunch of high-profile folks from across the world, so I suspect it will be a good way to take the pulse of co-design and tell whether the story has evolved at all.

- The concluding keynote at 5:45pm will be the first time that Thomas Sterling is not giving his wacky end-of-conference keynote. Instead, ISC is going with a fireside chat between Horst Simon, John Shalf, and Rosa Badia about the future of HPC hardware and software. The abstract sounds very broad, but something tells me the answer will be chiplets and designing custom silicon. I look forward to being proven wrong!

Thursday: Workshops

I'll be spending all of Thursday at the workshops, which is usually my favorite day of the conference. If ISC is a distilled, low-fat version of the SC conference, the ISC workshop day is an even more concentrated dose of technical content. Many of the high-profile members of the HPC community stick around for Thursday to give keynotes at the different workshops, and I've found that the smaller session sizes invite a lot more openness and dialogue. If there's ever a perfect day for an early-career attendee to accost a luminary in the field for an informal chat, it's the workshop day.

I'll be presenting at two workshops which I described earlier, but I'll see if I can stick my head in some others. There is always too much packed into the one day to sit in on everything I'm interested in.